Did you know that your Australian Business Number (ABN) can be cancelled by the tax office? There are only a few reasons this can happen, and one of them stems from your business's lack of profitability. Another is that the ATO deems your business to be a hobby rather than an enterprise. Violations and a mistakenly issued ABN are the other common reasons.

A Look at Unprofitability and Hobby versus Enterprise Cancellations

A February 20, 2007 judgment by the president of the Administrative Appeals Tribunal highlights one case in which an ABN was cancelled due to lack of profitability. The conflict arose when the business owner's ABN was cancelled in 2000 because it was believed that he was no longer operating a business and had no hope of making a profit.

The business owner was adamant that he operated until late 2004 and, based on the proof presented, the tribunal agreed that he did in fact operate an enterprise. It was also decided that he had a reasonable expectation that a profit was possible; however, he did not make one, so the ABN was still cancelled. The difference was that the cancellation was made effective from 2004 instead of 2000.

How to Avoid This

While there is no set formula for growing your business, a sound business plan can help you analyze profitability before you start. Additionally, running your business efficiently will help you spend less while getting the most out of each dollar. Plus, you are more likely to get the funding you need to make necessary changes if you can demonstrate a viable plan and effective management.

How Your Bookkeeper Can Help Avoid This

A positive cash flow improves the outlook of your company because it means you have enough to survive from day to day. While this may not be outright proof that your business is profitable, it does mean that it is not in immediate distress. Furthermore, keeping a careful watch over your cash flow will help you see what is going wrong and give you a better chance to fix it, which can lead to greater efficiency and the improvements you need to grow. These are just some of the reasons to practice good bookkeeping.

In fact, a competent bookkeeper can help you separate your assets from your actual liabilities and show you how to save money. Plus, organized financial statements and reports allow you to spot immediately whether you are making a profit or a loss. At the very least, proper bookkeeping will help you see how close you are to, or how far you are from, your breakeven point.
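To make the breakeven idea concrete, here is a minimal sketch using the common contribution-margin approach: fixed costs divided by the margin earned on each unit sold. The function names and all dollar figures are hypothetical and chosen only for illustration; your own bookkeeper may calculate breakeven differently depending on how your costs are structured.

```python
def breakeven_units(fixed_costs, price_per_unit, variable_cost_per_unit):
    """Units that must be sold for revenue to cover all costs.

    Hypothetical helper: assumes a single product with a constant
    selling price and a constant variable cost per unit.
    """
    margin = price_per_unit - variable_cost_per_unit
    if margin <= 0:
        raise ValueError("Each sale must earn more than it costs to make.")
    return fixed_costs / margin


def units_from_breakeven(units_sold, fixed_costs, price_per_unit,
                         variable_cost_per_unit):
    """Positive result: units sold beyond breakeven. Negative: units short."""
    target = breakeven_units(fixed_costs, price_per_unit,
                             variable_cost_per_unit)
    return units_sold - target


# Illustrative figures only: $5,000 in fixed costs, a $50 selling price,
# and $30 in variable costs per unit.
print(breakeven_units(5000, 50, 30))            # 250.0
print(units_from_breakeven(200, 5000, 50, 30))  # -50.0 (50 units short)
```

With these assumed figures, the business would need to sell 250 units to break even, so 200 units sold leaves it 50 units short of covering its costs.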

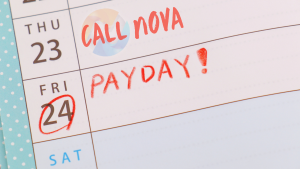

The Benefit of Outsourcing

Growing a business takes time and vigilance; outsourcing your bookkeeping tasks will therefore allow you to focus on other things. Best of all, you get the services you need from a competent professional who will not add to your overhead costs. We cannot make any promises or predictions about the future of your company, but we can offer you the best in bookkeeping services to help you get ahead. Contact us now.